12/3/2023

Decision tree entropy

Branch - The lines connecting elements are called "branches".

    import numpy as np

    # Import the dataset and define the feature as well as the target datasets / columns
    ...
        'airbone', 'aquatic', 'predator', 'toothed', 'backbone',
        'breathes', 'venomous', 'fins', 'legs', 'tail', 'domestic', 'catsize', 'class'])  # Import all columns, omitting the first, which contains the names of the animals
    # We drop the animal names since this is not a good feature to split the data on
    dataset = dataset.drop('animal_name', axis=1)

    def entropy(target_col):
        elements, counts = np.unique(target_col, return_counts=True)
        entropy = np.sum([(-counts[i] / np.sum(counts)) * np.log2(counts[i] / np.sum(counts)) for i in range(len(elements))])
        ...

    def InfoGain(data, split_attribute_name, target_name="class"):
        # Calculate the entropy of the total dataset
        ...
        # Calculate the values and the corresponding counts for the split attribute
        vals, counts = np.unique(data[split_attribute_name], return_counts=True)
        Weighted_Entropy = np.sum([(counts[i] / np.sum(counts)) * entropy(data.where(data[split_attribute_name] == vals[i]).dropna()[target_name]) for i in range(len(vals))])
        Information_Gain = total_entropy - Weighted_Entropy
        ...

    def ID3(data, originaldata, features, target_attribute_name="class", parent_node_class=None):
        # Define the stopping criteria -> if one of these is satisfied, we want to return a leaf node
        # If all target_values have the same value, return this value
        ...
        # The direct parent node is the node that called the current run of the ID3 algorithm; hence
        # the mode target feature value is stored in the parent_node_class variable
        ...
        # If none of the above holds true, grow the tree!
        ...
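Since the excerpt above leaves several lines out, here is a minimal, self-contained sketch of how an ID3 routine along these lines might look. It is an illustration under assumptions, not the original code: it uses plain Python lists of dicts with `math.log2` and `collections.Counter` instead of pandas and NumPy, the names `info_gain`, `majority`, `id3`, and `toy_rows` are hypothetical, and the toy rows are made up for the example (they are not taken from the dataset).

```python
import math
from collections import Counter


def entropy(labels):
    """Shannon entropy (base 2) of a list of class labels."""
    total = len(labels)
    return sum(-(n / total) * math.log2(n / total) for n in Counter(labels).values())


def info_gain(rows, attribute, target="class"):
    """Entropy of the target minus the weighted entropy after splitting on `attribute`."""
    total_entropy = entropy([r[target] for r in rows])
    weighted_entropy = 0.0
    for value in {r[attribute] for r in rows}:
        subset = [r[target] for r in rows if r[attribute] == value]
        weighted_entropy += len(subset) / len(rows) * entropy(subset)
    return total_entropy - weighted_entropy


def majority(rows, target):
    """Most common target value in `rows` (the mode target feature value)."""
    return Counter(r[target] for r in rows).most_common(1)[0][0]


def id3(rows, original_rows, features, target="class", parent_node_class=None):
    # Stopping criteria: if one of these is satisfied, return a leaf.
    if not rows:                       # empty subset: fall back to the original data
        return majority(original_rows, target)
    labels = [r[target] for r in rows]
    if len(set(labels)) == 1:          # all target values are the same
        return labels[0]
    if not features:                   # nothing left to split on: use the parent's mode
        return parent_node_class

    # None of the above holds: grow the tree.
    parent_node_class = majority(rows, target)
    best = max(features, key=lambda f: info_gain(rows, f, target))
    remaining = [f for f in features if f != best]
    tree = {best: {}}
    for value in sorted({r[best] for r in rows}):
        subset = [r for r in rows if r[best] == value]
        tree[best][value] = id3(subset, original_rows, remaining, target, parent_node_class)
    return tree


# Made-up toy rows, for illustration only.
toy_rows = [
    {"backbone": 1, "fins": 1, "class": "fish"},
    {"backbone": 1, "fins": 1, "class": "fish"},
    {"backbone": 1, "fins": 1, "class": "fish"},
    {"backbone": 1, "fins": 0, "class": "mammal"},
    {"backbone": 0, "fins": 0, "class": "insect"},
    {"backbone": 0, "fins": 0, "class": "insect"},
]

tree = id3(toy_rows, toy_rows, ["backbone", "fins"])
print(tree)
# {'fins': {0: {'backbone': {0: 'insect', 1: 'mammal'}}, 1: 'fish'}}
```

In this sketch the tree is a nested dict: each key is an internal node (the attribute chosen for the split), each attribute value is a branch, and each string is a leaf. On the toy rows, `fins` is picked first because its information gain (1.0) is higher than that of `backbone` (about 0.918).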

Short, or branch node) is any node of a tree that has child nodes

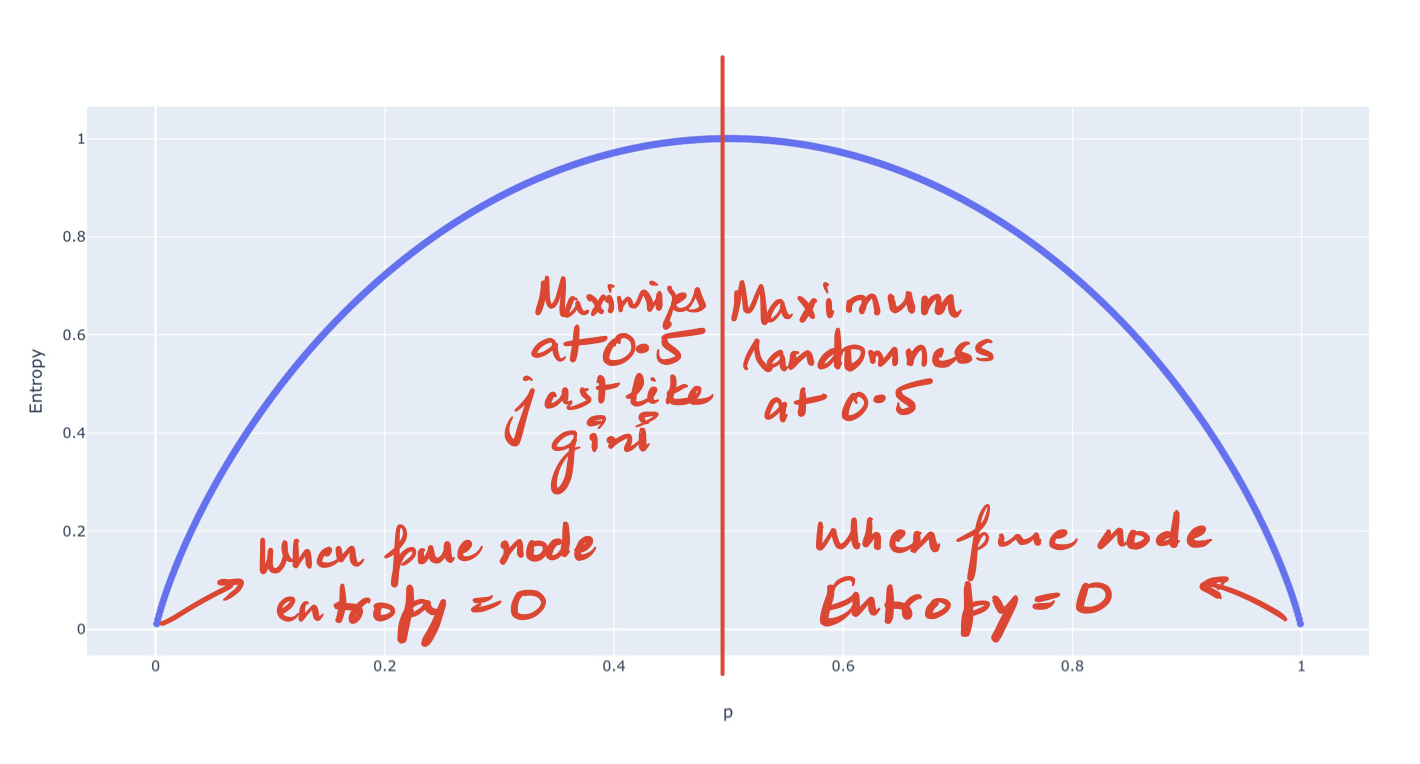

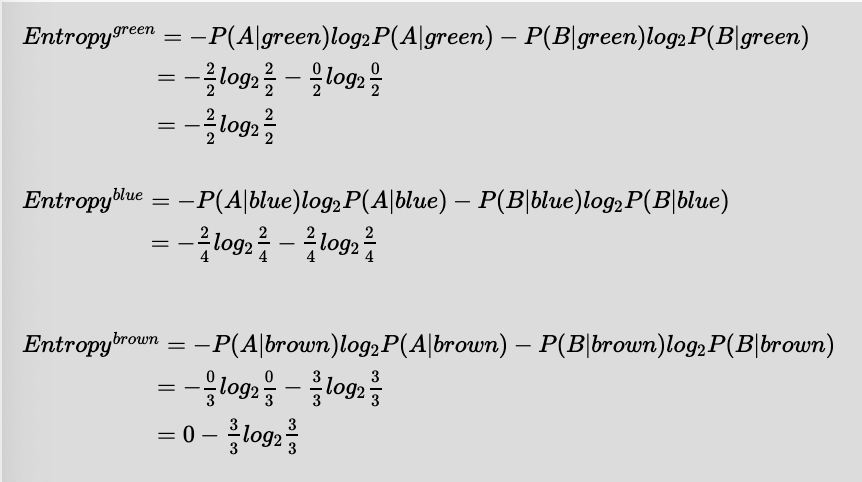

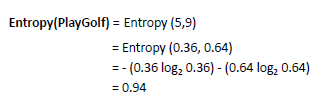

Internal Node - An internal node (also known as an inner node, inode for.Constructing a decision tree is all about finding an attribute that returns the highest information gain (i.e., the most homogeneous branches). Information Gain - The information gain is based on the decrease in entropy after a dataset is split on an attribute.If the sample is completely homogeneous the entropy is zero and if the sample is equally divided it has an entropy of one. ID 3 algorithm uses entropy to calculate the homogeneity of a sample.
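The two statements above can be written out as formulas. The notation is an assumption introduced here for illustration: $S$ is the sample, $p_i$ the fraction of $S$ belonging to class $i$, $A$ an attribute, and $S_v$ the subset of $S$ where $A$ takes the value $v$.

$$
H(S) = -\sum_{i} p_i \log_2 p_i
\qquad
\mathrm{Gain}(S, A) = H(S) - \sum_{v \in \mathrm{values}(A)} \frac{|S_v|}{|S|}\, H(S_v)
$$

For a completely homogeneous sample there is a single class with $p_1 = 1$, and for a two-class sample split evenly both fractions are $\tfrac{1}{2}$:

$$
H = -1 \cdot \log_2 1 = 0
\qquad
H = -\left(\tfrac{1}{2}\log_2\tfrac{1}{2} + \tfrac{1}{2}\log_2\tfrac{1}{2}\right) = 1
$$

The gain is largest when the weighted sum of branch entropies is smallest, which is why the attribute with the highest information gain yields the most homogeneous branches.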
